I’m not a behavioural science expert, but I do think it provides a useful lens when we’re trying to understand how people make decisions. One thing I’ve always struggled with, however, is how to navigate the long list of cognitive biases (the Wikipedia page lists something like 150+).

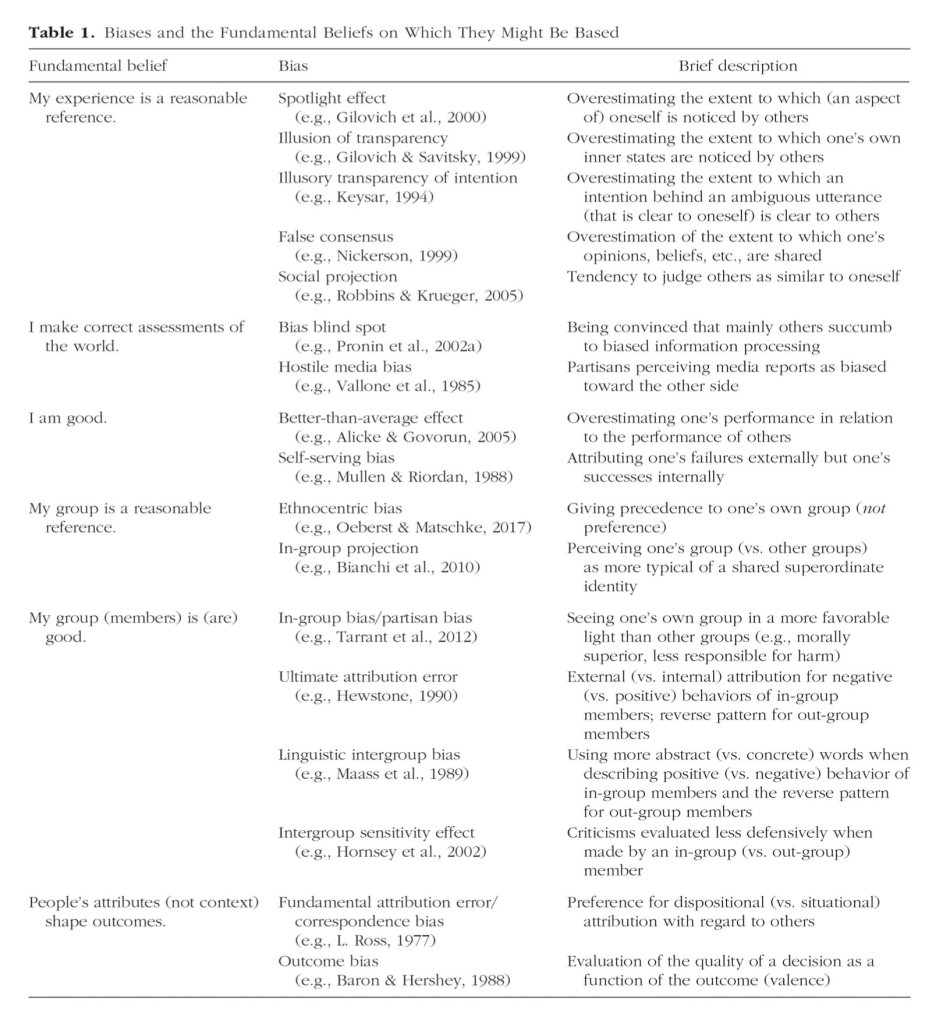

The other week (via @SteveStuWill) I came across a fascinating paper arguing that biases have largely been studied in separate lines of research, which has precluded the identification of common principles across different cognitive biases. The paper further posits that many biases can be traced back to ‘a fundamental prior belief and humans’ tendency toward belief-consistent information processing’. In other words, it’s possible to group biases around a core set of basic beliefs (like ‘I’m a good person’ and ‘my experience is a reasonable reference’), each compounded by our tendency to look for information consistent with that belief. As the abstract says: ‘What varies between different biases is essentially the specific belief that guides information processing’.

What’s interesting is how this enables a logical grouping of common biases which (alongside useful frameworks for application like the E.A.S.T framework from the Behavioural Insights Team) can perhaps help us to navigate a complex field. Also interesting, I think, is the prevalence of what the authors call ‘belief-consistent information processing’, which to me indicates how pervasive confirmation bias is (our tendency to focus on information that confirms our existing world view). This is something I’ve often observed in the work that I do with teams. All of this emphasises the need not just to be aware that confirmation bias can lead to poor decision-making, but also to search out disconfirming information, which is really what genuine exploration is all about.

One last thought: it’s interesting to consider the role of AI in all this. To what extent can it help us to make decisions that reflect objective data or, conversely, to what extent might the design of AI simply end up confirming existing belief systems? I guess we shall see.
